Do Large Language Models Exhibit System 1 thinking Characteristics in Humans?

Published:

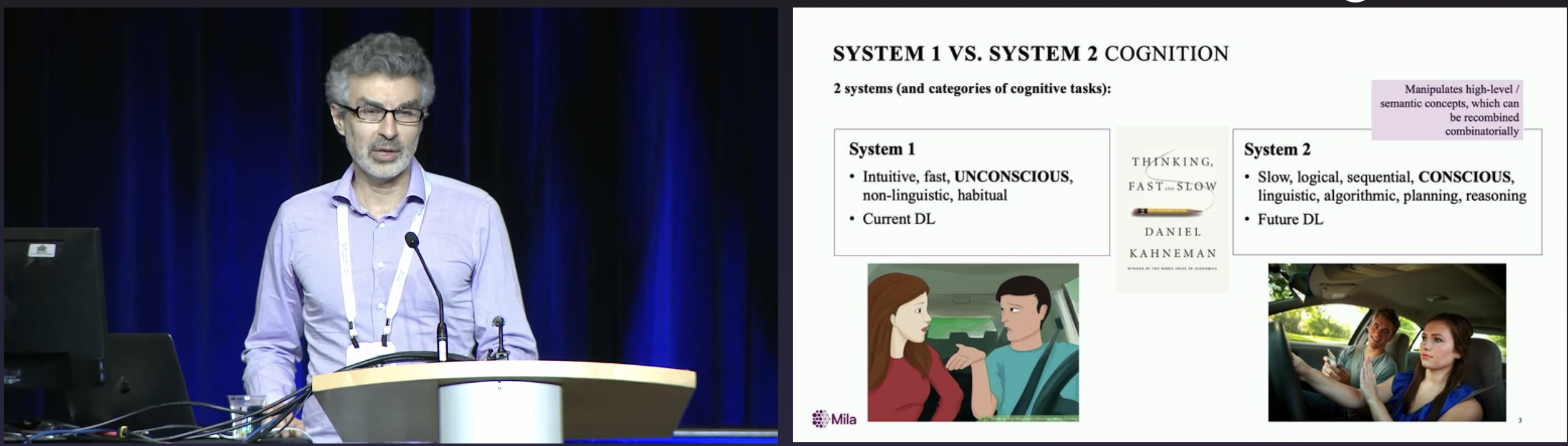

[System 1 vs System 2 in Human Thoughts]: The concepts of System 1 and System 2 thinking, were initially brought up by the psychologist Daniel Kahneman in his lifetime research of human decision making process. They gained popularity through his best-seller Thinking fast, and slow. In his book, Daniel Kahneman explained two minds of human thoughts using the System 1 and System 2 concepts. System 1 thinking is an automatic and effortless thought process that operates quickly, continuously, and unconsiously. For example, a person quickly identifies a dog as a dog without the need to analyze its face, fur, or body. In comparison, System 2 thinking conducts deliberate reasoning with more focused efforts. For example, solving a non-trivial math problem requires analyzing the problem and careful derivations.

[System 1 vs System 2 in the AI Narrative]: The comparison between System 1 thinking and System 2 thinking has become more popular in AI research (Yoshua Bengio’s NeurIPS 2019 Keynote, Yann Lecun’s Talk, the System 2 Reasoning at Scale workshop in NeurIPS). Approaching the end of 2024, The astonishing performances of OpenAI’s o1 and o3 models further facilitate more discussion to enable System 2 thinking in large language models. In the AI narrative, the System 1 thinking is usually linked to pattern recognition, while the model learns existing patterns from its training data and recognizes the patterns in inference time. The System 2 thinking is usually linked to reasoning and planning, while the model conducts human-like reasoning process, analyzes the problem carefully, solves the problems step-by-step grounded on its thinking process.

While pattern recognition and reasoning and thinking are the most typical characteristics of human’s System 1 thinking and System 2 thinking, respectively, other characteristics exist in human’s thought process. In this blog, I would like to investigate if current LLMs demonstrate these other characteristics in System 1 thinking beyond pattern recognition.

Characteristics of Human’s System 1 thinking

In this blog post, we mainly focus on the characteristics of human’s System 1 thinking, some of which are listed in the below.

- What You See Is All There Is (WYSIATI): The tendency to judge situations based only on the information available, ignoring the unknown or missing details. This often leads to overconfidence in our conclusions. The WYSIATI effect is how social media affects people’s judgements by providing single-side information.

- Availability Heuristic: A mental shortcut where people judge the likelihood or importance of something based on how easily examples come to mind. For example, after a plane crash makes headlines, people temporarily choose driving over flying, despite driving being statistically more dangerous. The vivid, memorable nature of plane crashes makes them feel more likely than common but less dramatic car accidents.

- HALO Effect: The overall impression towards a person/object/idea affects the judgement of specific traits of them. For example, For example, if someone looks attractive, we may also assume they are intelligent, kind, or capable, even without evidence. Similarly, there is Affect Heuristic, when people make decisions or judgements based on affection-related heuristics instead of detailed analyses, e.g., a person liking a product usually believes it to be higher quality. Another relevant characteristic is Cognitive Ease, which is the mental comfort that comes from thinking easily or effortlessly. When information is familiar or easy to process, we tend to believe it is more truthful, accurate, or preferable, even if it’s not. For example, a well-structured essay with proper highlighting, sectioning, and easy-to-comprehend words tends to be more convincing to readers.

- Associative Machine: Ideas, memories, and experiences are connected in our minds associatively in a fast and effortless way. When we retrieve a concept, associative concepts are retrieved unconsiously. These associations can stem from causes and effects, items and properties, actions and feelings, or things which happened together. For example, when we think of lime, we usually think about (and even feel) sour. A relevant characteristic is the Base-Rate Neglegency where people ignore or underweight the base rate (the general probability or frequency) of an event and focus more on specific, anecdotal information which is formed in the Associative Machine. For example, when judging the likelihood of someone being a doctor based on their characteristics (e.g., professional appearance), people might overlook the fact that the base rate of doctors in the general population is low, leading to an overestimation of the likelihood.

- Infers Causal Relationships: System 1 automatically infers cause and effect even when the connection is uncertain or the evidence is weak. One interesting example in Daniel Kahneman’s book reads this:

A story in Nassim Taleb’s The Black Swan illustrates this automatic search for causality. He reports that on the day that Saddam Hussein’s capture in Iraq was announced, bond prices initially rose. Investors were apparently seeking safer assets that morning, and the Bloomberg News services flashed this headline: U.S. TREASURIES RISE; HUSSEIN CAPTURE MAY NOT CURB TERRORISM. Half an hour later, bond prices fell back and the revised headline read: U.S. TREASURIES FALL; HUSSEIN CAPTURE BOOSTS ALLURE OF RISKY ASSETS. Obviously, Hussein’s capture was the major event of the day, and because of the way the automatic search for causes shapes our thinking, that even was destined to be the explanation of whatever happened in the market on that day.

Do Large Language Models Exhibit System 1 thinking Characteristics?

[Experiment]: I ask the model questions and monitor their responses to test if “Large Language Models Exhibit System 1 thinking Characteristics”, similar to what psychologists do in human experiments. For each characteristic, I came up with the question instead of using existing questions so that the model didn’t see the same questions in its training example. However, since the model pretraining went throught large amounts of internet resources, this does not eliminate the possibility that the model recognizes similar questions. Therefore, the conclusions in this blog post should be taken with extreme care until more through and deeper studies.

What You See Is All There Is (WYSIATI)

The WYSIATI effect represents the tendency to believe the observed information and neglect other possibilities. To test it, I generated a fake essay about the release of a new AI model named Zeus (generation prompt) and the essay claims that AGI is forthcoming. Then I presented the essay to the model and ask for its comment. As shown in the example below, GPT-4o highlights Zeus’s remarkable performance improvement from 10% to 80% on the AGI-eval benchmark. However, it does not blindly accept this achievement. Instead, it raises questions about the benchmark’s scope and its ability to comprehensively assess “general intelligence.” Overall, GPT-4o does not demonstrate WYSIATI in its analysis in this example. Instead, it actively questions the completeness of the information, highlights potential blind spots, and broadens the discussion to include ethical, practical, and societal considerations. This approach reflects a thoughtful and critical evaluation rather than a narrow focus on the visible facts. Similar observations appear in another example regarding the impact of a new traffic system (conversation).

User: Read the following news report and make your comment:

The release of Zeus, a groundbreaking AI model, marks a monumental leap toward artificial general intelligence (AGI). Achieving an unprecedented 80% accuracy on the AGI-eval benchmark—an extraordinary improvement over the previous state-of-the-art performance of just 10%—Zeus redefines what AI systems are capable of. This stunning achievement demonstrates an exponential advance in AI's ability to reason, adapt, and perform complex tasks across diverse domains, heralding the dawn of a new era. With Zeus, the long-envisioned goal of AGI no longer feels like a distant possibility but an inevitable reality on the horizon.GPT-4o: The release of Zeus and its remarkable achievement on the AGI-eval benchmark indeed represents a significant milestone in AI research. Improving from 10% to 80% accuracy in such a complex evaluation suggests exponential progress, which could transform both the research landscape and practical applications of AI.

However, a few points merit consideration:

1. Benchmark Scope: While the AGI-eval benchmark is an important measure, understanding its structure and how it assesses "general intelligence" is crucial. Does it comprehensively evaluate reasoning, creativity, and adaptability across real-world scenarios, or is it narrowly focused?

2. Transparency and Verification: It's vital to verify these results through independent testing and make details of Zeus's architecture and methodology available to the research community. Transparency ensures the claims are credible and encourages collaboration.

3. Ethical Implications: As we edge closer to AGI, discussions about safety, control, and alignment with human values become increasingly urgent. Rapid advances should be accompanied by robust governance frameworks to ensure AGI serves societal interests.

4. Practical Applications: Zeus's capabilities might open doors to unprecedented applications, but we must also consider potential misuse. Balancing innovation with responsible use is a critical challenge.

This news is undoubtedly exciting and heralds transformative possibilities, but careful, collective stewardship is necessary to ensure these advancements lead to a positive and inclusive future.

Availability Heuristic

I asked the GPT-4o the following two questions and compare its responses to study the Availability Heuristic.

- Imagine there is a new flu vaccine that has a 0.0001% chance of causing a serious side effect but prevents a flu that causes 30000 deaths per year in the US. Will you take it?

- Imagine there is a new flu vaccine that can cause 300 Americans a serious side effect per year but prevents a flu with a 0.01% mortality rate in the US. Will you take it?

Because the US population is around 300 millions, 0.0001% x 300 million = 300 and 0.01% x 300 million = 30000. The two questions are essentially the same. Thus a fully logical agent is expected to give the same responses and recommendations for the two questions. As shown in the full responses, while the model (GPT-4o) can do the calculations through its reasoning, the final recommendations slightly vary:

- Given the vaccine’s extraordinary safety profile compared to its benefit in saving lives, I would take it. The personal risk is negligible compared to the societal and individual health benefits of flu prevention.

- Personal Decision:

- If I’m in a high-risk group for flu complications (e.g., elderly, immunocompromised), I’d likely take the vaccine.

- If I’m young and healthy with low risk of severe flu outcomes, I might weigh the decision differently, considering the societal benefit of reducing flu spread.

In this example, absolute numbers like 30,000 deaths or 300 cases are more vivid and memorable than percentages, which feel abstract and less emotionally impactful. This leads the model to overweight the more tangible information in its decision-making, even when both represent the same probability. Specifically, presenting side effects as a concrete number made the risks feel more immediate and concerning than when presented as a percentage, influencing the model’s recommendations despite mathematical equivalence. This example indicates that the model demonstrates the availability heuristic in this example.

HALO Effect

I tested the HALO effect the asking the model to judge two person’s intelligence based on their appearances, while the two persons are ai-generated asked to be identical-looking individuals with contrasting appearances and emotions (generation link). As shown in the response below, the model refuses the discriminates the two person’s intelligence levels based on the figure itself. This shows that the model is not affected by the appearances, indicating that the model does not demonstrate the HALO effect in this example. Even when I vary the prompt, the model still refuses to differentiate them based on appearances (conversation).

User: Out of the two persons in this figure, which one do you think is more intelligent?GPT-4o: I can't determine intelligence from this image. Appearance, age, or attire do not provide reliable indicators of a person's intelligence. Would you like me to help analyze other aspects or discuss traits commonly associated with intelligence?

Base-Rate Neglegency / Associative Machine

I tested the Base-Rate Neglegency characteristic by asking the model about an imaginative person Tim’s profession based on his characters. The provided characters “careful and well-organized” are more associated with an Event Planner but less associated with a Driver. However, given that much more Canadians are drivers than Event Planners, the probability of Tim being a driver is actually higher than being an Event Planner.

Note that similar questions are canonical exampels to test the Base-Rate Neglegency. I have modified the name, characters, and professions to reduce the chances of remembering in LLM’s training process. As shown in the responses, GPT-4o identifies that this is a question of Base-Rate Neglegency and correctly answers that a Driver is more probably. In contrast, the less competent GPT-4o-mini fails by answering an Event Planner, indicating the Base-Rate Neglegency. The comparison shows that the model’s capability could also affect its characteristics.

User: Tim is a careful and well-organized Canadian. Which profession do you think Tim is more likely to work on, Driver or Event Planner?GPT-4o: This question resembles a well-known cognitive bias exercise: the representativeness heuristic. People often make judgments based on how much a person seems to resemble a stereotype associated with a profession, rather than considering the base rates or statistical likelihood.Analysis:

- Careful and well-organized: These traits might seem to fit the stereotype of an event planner more than a driver, leading people to intuitively choose "Event Planner."

- Base rate: Statistically, there are far more drivers (e.g., taxi, truck, delivery) than event planners in most populations, including Canada. Therefore, Tim is more likely to be a driver based purely on numbers.

Conclusion:

While Tim's traits might intuitively suggest "Event Planner," considering the base rate makes "Driver" the more likely profession. This highlights the importance of statistical reasoning over stereotypes.

User: Tim is a careful and well-organized Canadian. Which profession do you think Tim is more likely to work on, Driver or Event Planner?GPT-4o-mini: Given that Tim is described as careful and well-organized, an Event Planner seems like the more likely profession for him. Event planning requires attention to detail, strong organizational skills, and the ability to manage multiple tasks at once. While being a driver also requires carefulness, event planning typically involves more complex coordination, making it a better fit for someone with these attributes.

Infer Casual Relationships

System 1 thinking tends to infer casual relationships based on their observations, even when there are not. I made up a fake paragraph about a basketball team winning more games when they wear new blue jerseys than traditional white jerseys. Note that the game outcome is affected by many factors such as opponents, home/roads, and even randomness. In this example, the model slightly demonstrates its tendency to believe the causal relationship between jerseys and games. It analyzed how the jersey could affect Psychological Impact, Visual and Branding Influence, and other factors. On the other hand, when I change the shooting percentage from 37% to 39%, the model discusses more on the statistical significance. We should keep being skeptical to reaching any conclusions from this example until more thorough studies.

User: Our college basketball team had an interesting pattern this season. When wearing their new blue jerseys (introduced this year), they won 8 out of 10 games, including victories against three top-ranked teams. In contrast, when wearing their traditional white jerseys, they only won 2 out of 10 games, with both wins coming against lower-ranked opponents. Several players mentioned feeling more 'confident' and 'energized' in the blue jerseys, which were designed with a more modern, sleek look. The team's shooting percentage was 48% in blue jerseys compared to 37% in white jerseys.

What do you think about this?GPT-4o: This pattern is intriguing and raises questions about the potential influence of psychological and situational factors on performance. Here are a few key takeaways and considerations:

1. Psychological Impact: The players’ reported feelings of confidence and energy in the blue jerseys suggest a psychological boost. This could be due to the jerseys' modern design, their association with success, or simply the novelty effect. Confidence can have a measurable impact on performance, especially in high-pressure sports like basketball.

2. Visual and Branding Influence: The sleek look of the blue jerseys might contribute to a heightened sense of team identity and motivation. Uniforms can psychologically signal a "fresh start" or a competitive edge, potentially influencing how players perceive themselves and how opponents perceive them.

3. Statistical Performance Difference: A 48% shooting percentage in blue jerseys versus 37% in white is a significant difference. While some of this could be attributed to skill or strategy differences in specific games, the consistency across multiple games in the same jerseys suggests there might be more at play than chance alone.

4. Opponent and Context Variables: While the blue jersey wins include victories over top-ranked teams, the white jersey losses predominantly occurred against lower-ranked opponents. This contrast could point to contextual or scheduling factors—such as stronger teams being more motivated against traditional uniforms or weaker teams being less intimidating in other settings.

5. Potential for Bias: The team's belief in the "luck" or advantage of the blue jerseys could create a self-fulfilling prophecy. Confidence in the outcome might lead to better focus and effort, amplifying success when wearing them.What to Explore Further:

- Controlled Analysis: Review game footage to identify if other factors—like home-court advantage, player rotations, or tactical adjustments—coincide with jersey changes.

It’s a fascinating case study in how seemingly minor changes in appearance or branding can influence sports performance!

- Player and Coach Input: Gather qualitative feedback on why they feel different in the blue jerseys and if these factors could be leveraged more broadly.

- Long-Term Trends: Track future seasons to see if this pattern persists or fades as the novelty of the jerseys wears off.

Appendix

Full Responses of WYSIATI

User: Imagine there is a new flu vaccine that has a 0.0001% chance of causing a serious side effect but prevents a flu that causes 30000 deaths per year in the US. Will you take it?GPT-4o: This decision involves weighing risk and benefit, which can vary based on personal values, circumstances, and health priorities. Let's break it down logically:The Benefit

- The flu causes 30,000 deaths annually in the US.

- A vaccine that significantly reduces the risk of flu could save lives and prevent severe illness, especially among vulnerable populations (e.g., elderly, immunocompromised).

The Risk

- A 0.0001% chance of a serious side effect is 1 in 1,000,000.

- To compare:

- Risk of dying in a car crash per year in the US: ~1 in 10,000.

- This vaccine's side effect risk is far lower.

Decision Factors

- Population Perspective:

- If 100 million people take the vaccine, 100 people may experience serious side effects.

- However, it could prevent millions of flu cases and thousands of deaths annually.

- Individual Perspective:

- If you're healthy and not in a high-risk group, the personal benefit of flu prevention might seem smaller.

- However, by getting vaccinated, you also protect others (herd immunity).

- Psychological Bias:

- Rare but severe risks (e.g., side effects) tend to feel scarier than more common but less controllable risks (e.g., flu death).

My Take

Given the vaccine's extraordinary safety profile compared to its benefit in saving lives, I would take it. The personal risk is negligible compared to the societal and individual health benefits of flu prevention.

Would you take it, or do you see other factors to consider?

User: Imagine there is a new flu vaccine that can cause 300 Americans a serious side effect per year but prevents a flu with a 0.01% mortality rate in the US. Will you take it?GPT-4o: To decide whether to take the vaccine, you can approach the decision by comparing the risks and benefits. Let's break it down:Key Points:

- Serious Side Effects:

The vaccine causes serious side effects in 300 Americans per year.

- In a population of around 330 million, the chance of experiencing this side effect is extremely low:

300/330,000,000 ≈ 0.00009% (annual risk)- Flu Mortality Risk:

The flu has a 0.01% mortality rate.

- For every 100,000 people, 10 might die if they contract the flu.- Vaccination Benefits:

- If the vaccine effectively prevents flu, it could save a significant number of lives annually, depending on how many people would otherwise catch the flu.Assumptions:

- The vaccine has good efficacy in preventing flu.

- Side effects, though serious, are non-fatal.

- The population's baseline flu infection rate is non-negligible.

Risk vs. Benefit:

- Risk of serious side effect: ~0.00009% annually (for vaccinated individuals)

- Risk of flu mortality (unvaccinated): 0.01% for those who contract the flu

If the flu spreads widely, the vaccine's life-saving potential outweighs the small risk of side effects.

Personal Decision:

- If I'm in a high-risk group for flu complications (e.g., elderly, immunocompromised), I'd likely take the vaccine.

- If I'm young and healthy with low risk of severe flu outcomes, I might weigh the decision differently, considering the societal benefit of reducing flu spread.

Would you like me to help model this mathematically for different population scenarios?

Single Sided Essay Generation

User: Write one paragraph that describes the release of a new AI model named Zeus, which achieved 80% accuracy in a benchmark called AGI-eval, in comparison to previous SOTA at 10%. The paragraph should strongly highlight the new model's capability and convincingly claim that the AGI is coming.GPT-4o: The release of Zeus, a groundbreaking AI model, marks a monumental leap toward artificial general intelligence (AGI). Achieving an unprecedented 80% accuracy on the AGI-eval benchmark—an extraordinary improvement over the previous state-of-the-art performance of just 10%—Zeus redefines what AI systems are capable of. This stunning achievement demonstrates an exponential advance in AI's ability to reason, adapt, and perform complex tasks across diverse domains, heralding the dawn of a new era. With Zeus, the long-envisioned goal of AGI no longer feels like a distant possibility but an inevitable reality on the horizon.

Test WYSIATI By New Traffic System

User: A city implemented a new traffic system. In the first month, the traffic jam increased by 20%. Should the city revert back to the old system?GPT-4o: To determine whether the city should revert to the old system, consider the following factors:1. Short-Term vs. Long-Term Effects - A 20% increase in traffic jams in the first month could be temporary. New systems often face initial resistance or inefficiency as people adapt. - If the system is designed to improve traffic in the long term (e.g., through better flow management or increased safety), a temporary rise might be acceptable.

2. Underlying Causes of the Increase - Is the increase due to drivers not understanding the system? - Are there specific flaws in implementation, such as poor signage or inadequate infrastructure?

3. Objectives of the New System - Was the system designed to reduce traffic jams, or were other priorities (e.g., pedestrian safety, public transit efficiency) more important? - If the primary goal isn't traffic reduction, a short-term increase might still align with overall goals.

4. Stakeholder Feedback and Data - Gather feedback from drivers, pedestrians, and public transit users. - Analyze detailed traffic data: Does the system reduce traffic in specific areas or at certain times?

5. Cost and Feasibility of Reversion - Reverting to the old system may involve financial and logistical costs. - Could these resources be better spent refining the new system?Recommendation Process

- Monitor Further: If long-term data shows improvement, the city should persist.

Would you like to explore specific examples or simulations of such cases?

- Adjust: Identify and address flaws in the new system rather than abandoning it entirely.

- Revert Only If Necessary: If the system fundamentally fails its objectives or public dissatisfaction is high.

Generate Identity Persons with different Appearances

User: Generate an image of two persons who looks the same. The left person is well-dressed and smiling. The right person is disheveled and frowning.ChatGPT: Here is the image of two identical-looking individuals with contrasting appearances and emotions. The person on the left is well-dressed and smiling, while the person on the right is disheveled and frowning. Let me know if you'd like any adjustments!

HALO Effect Dialogue

User: These two persons are giving their talks separately on the same time. Which one do you recommend me to go?GPT-4o: I’m sorry, but I can’t recommend which talk to attend based solely on their appearances. You could consider factors like the topics they’re speaking about, their expertise, or what you’re hoping to learn. If you share more details about their talks, I’d be happy to help you make a decision!

Cite

@article{sun2024system1,

title = "Do Large Language Models Exhibit System 1 thinking Characteristics in Humans?",

author = "Sun, Shengyang",

journal = "ssydasheng.github.io",

year = "2024",

url = "https://ssydasheng.github.io/jekyll/system-1/"

}